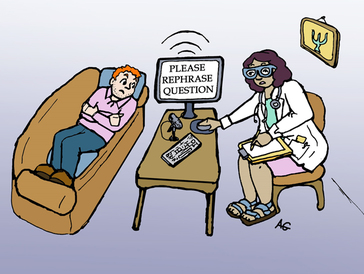

Today, we published the primary paper from our ongoing work with USC and UW demonstrating the feasibility of automatically evaluating the quality of therapist empathy in psychotherapy (here's the link to the paper in PLOS ONE). Here are links to an article in the L.A. Times, a story on phys.org, and a press release from UW that talk about the paper and our collaborative work with USC and UW. Side note: the L.A. Times piece mistakenly states that MI isn't well researched (which is very, very incorrect), and also doesn't mention that the paper was co-led by folks here in Utah (and funded partly by a grant based here at UU). I guess this is what people talk about re: the slings and arrows of news media and science. Update: Here's another article in the Washington Post, and another in Neuroscience News.

A recent blog post by Tom Insel cited a paper resulting from our lab's collaborative work on utilizing machine learning tools to evaluate psychotherapy process.

Stefan Hofmann & Joshua Curtiss recently reviewed the 2nd edition of The Great Psychotherapy Debate that I co-authored with Bruce Wampold. I appreciate the authors’ willingness to engage with some of the arguments in the book. However, there were multiple instances where the review either misunderstands key premises or simply fails to contend with core arguments. In the hopes that interested readers wading into this research area won’t come away with mistaken ideas about key concepts, I have a few comments:

Misunderstanding the Medical Model. According to Hofmann & Curtiss, the Contextual-Medical Model distinction is flawed because it holds that Medical Model based treatments must have some underlying biological explanation vs. Contextual Model treatments that are presumably more psychologically based. This biologically based view of the Medical Model in psychotherapy represents a fundamental misunderstanding of a primary premise of the book. The Medical Model of psychotherapy does not depend on biological reductionism. Indeed, both standard explanations of how CBT works and common factors explanations of psychotherapy can accommodate a biological level of analysis (e.g., social neuroscience of empathy, placebo/expectancy research on endogenous opioids, emotion regulation strategies). Psychodynamic theory fits the Medical Model of psychotherapy just as well as CBT (as does EMDR – to which we will return below). The key issue for the Medical vs. Contextual Model distinction is that a Medical Model of psychotherapy holds that psychotherapies work the way they say they do. Specifically, the Medical Model assumes:

The review also criticizes the Medical vs. Contextual Model distinction as “allowing for” EMDR (the claim that the Contextual Model somehow endorses ‘Thought Field Therapy’ isn’t even worth a response). Although it is not clear what the reviewers mean by “allowing for” EMDR, I reject the premise that the Contextual Model somehow advocates for specific treatments that are not currently within the mainstream of clinical science. What the Contextual Model offers is an explanation for why treatments that many believe are based on pseudo-science might actually help clients just as much as the most respected interventions psychology has to offer. What I find concerning is why advocates of CBT do not find the failure of their treatments to “beat” less scientific interventions cause for re-thinking their explanations for how treatments work (e.g., Why is it that EMDR and Present Centered Therapy work as well or almost as well as exposure based interventions? This is a question we have raised in several published articles and have yet to see a direct response). In the case of EMDR, a usual retort is that it is basically exposure with some finger wagging. However, by all reports the exposures in EMDR are fairly milquetoast compared to what we are trained to do in Prolonged Exposure. This is fairly problematic for the standard explanation of how exposure based treatments work, no? In sum, the Contextual Model no more advocates for EMDR than it does CBT. It simply provides a parsimonious explanation for why treatments that are based on flawed psychological principles are nevertheless sometimes quite helpful to patients. More personally, I regularly discuss EMDR as an example of pseudo-science with my students and would not advocate they get trained in the intervention as I believe it diminishes the credibility of psychology as a modern scientific enterprise. 
However, I also discuss its frankly shocking popularity as an example of the failure of treatment developers to consider that patients are not aplysia who can simply be strapped in for habituation protocols, but human beings who want psychological treatments that appeal to who they are and what they care about.

CBT is great, but evidence for treatment specificity really is lacking. At this point I would not think such a claim is controversial. Indeed, a recent article in Nature made similar claims. However, the review simply states as obvious that CBT shows strong evidence of specificity (as an aside, the review confuses disorder specificity – that a treatment targeting panic symptoms has a larger effect on panic than on depression – with treatment specificity – that a treatment works through targeted pathways). Specificity, what it is, and how it can be demonstrated is a central theme of the book. Hofmann & Curtiss’s review fails to contend with any of it. As an example, consider psychotherapy placebos – what in the book we call pseudo-placebos. I agree that CBT and most ‘bona fide’ interventions beat these placebos. However, there is a section of the book on problems with placebo controlled trials in psychotherapy, and why they do not and really cannot provide solid evidence for specificity in psychotherapy (e.g., the lack of the double blind, failure to control for all common factors). What is amazing is how effective many of the sham psychotherapies actually are. Hofmann & Curtiss must disagree with some aspect of this argument, but they fail to state what they find problematic. Hofmann & Curtiss also fail to cite or even acknowledge the mountain of evidence that stands in direct contradiction to claims of the relative superiority of CBT over bona fide alternatives.
For example, the review cites a 2010 meta-analysis by David Tolin as providing evidence that CBT is more effective than alternatives (notably this review treats EMDR as a CBT – raising questions about who exactly is advocating for pseudo-science). Briefly, the cited meta-analysis is part of a long (and slightly infuriating, if only in the friendly ‘oh my gosh’ here we go again, sort of way), long, long, long, back and forth on whether or not CBT-like interventions out-perform alternatives. Not surprisingly, we go into this quite a bit in the book, but Hofmann and Curtiss just cite one paper as if it provides definitive evidence and ignore prior and subsequent papers that raise direct questions about its conclusions. I would note that Tolin recently published a correction to his 2014 update on the general topic. We certainly all make mistakes (I certainly have, and will in the future). However, the error in Tolin’s 2014 meta-analysis fundamentally altered many of the results reported in the paper and should have led either to a retraction or a fundamental reshaping of the conclusions. My view is that the decades-long scrum over CBT vs. alternatives has most recently resulted in a big sigh……… There is not clear meta-analytic evidence that CBT is more effective than bona fide alternatives (this ignores the question of whether there is still an intellectually satisfying definition of what exactly CBT is). There are recent interesting examples on both sides of this question (e.g., long-term psychoanalysis getting demolished by CBT in bulimia, while time-limited dynamic therapy did quite well in comparison to CBT for anorexia, or the recent finding on IPT being equivalent to Prolonged Exposure in PTSD).
However, these single studies raise as many questions as they answer and await further study (e.g., did CBT beat psychoanalysis b/c analytic theory is flawed or b/c it was an unfocused, unstructured, newly developed version of the treatment? Does IPT truly not include exposure, or is exposure of the kind specified in "Prolonged Exposure" not really necessary for the required psychological process to occur?). On that topic, I believe the tools needed to conduct detailed, large scale tests of mechanism in psychotherapy do not currently exist (which is one area I am working on with my colleagues quite actively – stay tuned…).

The contextual model could be rejected – it is falsifiable. Hofmann & Curtiss argue that the Contextual Model is poorly specified and not falsifiable. They provide no specific rationale or examples for why this is the case, and simply state the constructs are vague and that there are no critical tests that could confirm or refute it. I have seen this argument pop up in a few different places recently among CBT advocates, and must admit that I find it baffling. I would be the first to admit that any number of constructs in the Contextual Model could and should be better specified. However, the psychological processes upon which the Contextual Model depends are among the most studied we have in psychology – empathy, expectancy, etc. The book is specifically organized as an outline of predictions that stem from either the Medical or Contextual Model! These predictions are in fact quite testable; they could result in evidence that supports one model or the other. Meta-analyses could provide consistent evidence of treatment differences or not, adherence could be correlated with treatment outcomes or not, the alliance outcome correlation could be zero or not, etc.
Hofmann and Curtiss would likely disagree with our particular take on this evidence, but the contention that the predictions of the Contextual Model are not testable is hard to follow and slightly bizarre.

The debate is not a distraction - it is simply an attempt to make sense of what psychotherapy researchers have been up to for the past several decades. Hofmann & Curtiss argue that in the era of neuroscience and reduced resources for psychotherapy research, we should stop quibbling amongst ourselves, and fight back against approaching biological reductionism. To do so, we should work on the integration of neuroscience into psychotherapy research. However, a deeper exploration of specificity and common factors is not at all inconsistent with this integration (for example, see a recent cool paper looking at the effect of expectancy in meditation). Certainly, innovation in psychotherapy research is needed, and another decade or two of moderately sized RCTs comparing Treatment A to Treatment B for disorder X, Y, Z in setting D, E, F may not have a dramatic impact on the burden of mental health disorders (i.e., the green eggs and ham approach to treatment research – would you like CBT in a box, would you like it with a fox, No?, what about ACT, would you like ACT?). Given the historical focus on clinical trials in psychotherapy research, it would be odd for scientists not to make attempts to synthesize this evidence and theorize about what it means. The lack of clear winners in this horse race approach to psychotherapy research has perhaps resulted in folks at NIMH losing interest in this particular strain of work and instead going for the moon shot of just figuring out how the brain works. It is important for psychotherapy science to contend with realities at NIMH, but there is no reason for psychotherapy research to turn away from the tension between specificity and common factors.
The common factors/specificity dialectic is essentially about treatment mechanism -- how do we understand the interplay of relationship and technique in psychotherapy? It remains a useful framework for interpreting the evidence and generating testable questions. At the same time, any framework can be an oversimplification, and work at the margins of the debate has particular merit. For example, how does exposure happen in the context of the emotionally laden therapeutic relationship given current research on interpersonal emotional regulation? Yet, it would seem odd in the era of wacky (but fun!) neuroscience on things like “brain to brain coupling” for psychotherapy researchers to avoid questions related to how the therapeutic relationship targets client distress. Hofmann and Curtiss state that, of course, the relationship is important and, of course, CBTers care about such things. Certainly, CBT researchers have made primary contributions to our understanding of the common factors, and their methodological critiques have inspired important innovations in research on the therapeutic relationship. Yet Hofmann and Curtiss’ perspective seems to de-emphasize research on how common factors work – that somehow common factors research is the antithesis of detailed mechanism research. This perspective is perhaps similar to that of drug researchers who believe the placebo effect is real, but don’t have much interest in understanding it in detail. In contrast, we argue the evidence suggests the impact of psychotherapy on the disease burden of mental health problems will depend on making scientific progress related to the common factors.

In sum, rather than compliment the quality of the writing in the book, we would prefer the reviewers criticize (even harshly) the actual arguments. It is not helpful to dismiss or ignore tough findings about which treatments work - and why - in hopes of taking on the neuroscience bogeyman.
Instead, psychotherapy researchers should dive further into questions the debate poses, doing the hard work of developing and testing novel predictions.

-Zac Imel

The Lab is gearing up for the Fall 2015-Spring 2016 school year. This includes setting up our new lab space, creating and revisiting research projects, and last but not least welcoming our two new lab members! Kritzia and Derek are gearing up for the semester, and we're excited to see what new energy they bring to the group. Welcome to Utah!

Our amazing rising 3rd year students are taking their preliminary exams over the next two weekends and we wish them the best of luck!

Monday Feb. 23rd was our official PhD applicant interview day for the Fall 2015 Counseling Psychology cohort. Thanks so much to all of you for taking the time, and traveling to the U. It was great meeting and getting to know you a little bit, and we wish you the best of luck as you finish out the application process!

Author: The Laboratory for Psychotherapy Science
